Automating election results from local data sources

We teamed up with the Voice of OC, a non-profit, local news site in Orange County, California, to automate their live election results.

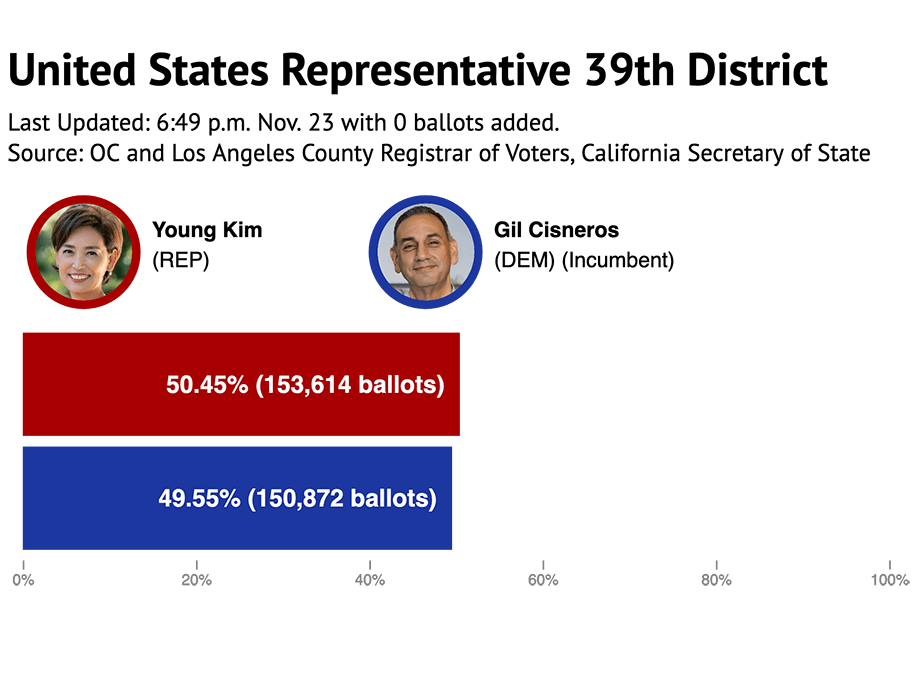

By integrating data from several authorities, we provided up-to-the-minute results for every kind of race from the local up to the federal level. Here’s a look at the tight race for the 39th U.S. House District between incumbent Democrat Gil Cisneros and Republican challenger Young Kim.

In the end, we produced close to 200 dynamic graphics and freed the Voice of OC reporters and editors from rote data entry, allowing them to cover the election’s outcomes more extensively and in greater depth.

Here’s how we did it

The front end

We wrote our chart-drawing code in an Observable notebook, relying heavily on D3.

The input for this code is a number that uniquely identifies a contest for public office or ballot measure. The output is an SVG element that scales to a wide range of screen sizes.

We then imported a single cell from our original notebook into five other notebooks, each of which rendered all the bar charts for a specific category of contests.

The cells from these notebooks were embedded throughout Voice of OC’s WordPress site as independent HTML elements and plain JavaScript that imported Observable’s Runtime.

We’ve demonstrated and written about Observable before. Literate programming environments are quickly becoming a new standard tool of data journalism practice, and Observable presents several benefits over more established alternatives like Jupyter Notebooks and R Markdown. Just to name a few:

- Observable runs in your browser, so you don’t have to download and install any software.

- Observable relies on native web technologies, so the jump from prototyping to publishing is short.

- Observable prioritizes collaboration, so you can easily share, reuse and remix your work with the work of others.

As an example of this last point: We easily repurposed the design and code for the Voice of OC graphics for colleagues covering elections here in Missouri. KOMU-TV and the Columbia Missourian each wanted live results showing data from two different data sources: The Associated Press’ API and the Missouri Secretary of State’s feed. Through the power of imports and multiplayer notebook editing, we completed these tasks in no time at all.

Many projects are simple enough to run entirely inside Observable. There is a typical sticking point, though: By design, Observable can fetch only from URLs set up for Cross-Origin Resource Sharing (CORS). Sadly, CORS access often isn’t enabled for public data resources, as was the case for most of the election results feeds and APIs we used. You can get around this issue by using cors-anywhere or some other proxy server, as demonstrated in this notebook and this one.

Even so, you might prefer writing and running your data pipeline code in other environments for a variety of reasons. For the Voice of OC project, we wanted to archive and thoroughly pre-process all data for our charts, and we wanted to do that work in Python.

The back end

For our data pipeline, we followed a recipe built on Amazon Web Services that we’ve been refining over the last couple of years. Configuration and deployment are simple, requiring only a few shell environment variables and a handful of AWS command line calls, and the infrastructure is scalable and cheap.

We archived all data in Amazon Simple Storage Service (S3), one of the most popular places to store and retrieve data on the web.

Voice of OC needed to collect election results from four available sources: the Orange County, Los Angeles County and San Bernardino County Registrars of Voters and the California Secretary of State. Each of the county registrars published a single XML resource containing results for all pending contests in its jurisdiction. The California Secretary of State had a REST API for getting results (as JSON) for individual contests and individual counties.

After fetching data from each source, we wrote an unmodified copy to an S3 bucket. A bucket is a lot like the file system on your personal computer: just a place to write, read and set access permissions on files, or “objects” in AWS parlance.
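As a rough illustration of that archiving step, here is a minimal sketch. It is not the project’s actual code: the `raw/<source>/<timestamp>` key layout, the function names and the bucket name are all assumptions made for the example. The only real API it relies on is the `put_object` call of a boto3 S3 client, which is passed in as a parameter so the function can be exercised without AWS credentials.

```python
from datetime import datetime, timezone


def raw_copy_key(source, fetched_at, extension):
    """Build an object key like 'raw/oc/20201104T040500Z.xml' (hypothetical layout)."""
    # fetched_at should be timezone-aware; it is normalized to UTC for the key.
    stamp = fetched_at.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    return f"raw/{source}/{stamp}.{extension}"


def archive_raw_copy(s3_client, bucket, source, body, fetched_at, extension="xml"):
    """Write the untouched response body to S3 and return the key that was used."""
    key = raw_copy_key(source, fetched_at, extension)
    # put_object is the boto3 S3 client method for writing a single object.
    s3_client.put_object(Bucket=bucket, Key=key, Body=body)
    return key
```

In a real deployment the client would typically come from `boto3.client("s3")`. Putting a timestamp in each key means every fetch is kept rather than overwritten, which is one way to make the archive useful for checking work later.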

For example, our latest copy of the election results published by the Orange County Registrar of Voters is available here, and our latest copy of the California Secretary of State’s statewide results for the ballot measures is available here.

To prepare data for an individual bar chart, we needed to:

- Extract the current results of an individual contest;

- Transform these parsed results into a simplified JSON format;

- Sum up individual candidate vote and total ballot counts for contests that span multiple counties; and

- Format and finalize data for each chart.
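The four steps above can be sketched in a few short Python functions. This is an illustration under stated assumptions, not the code we ran: the XML layout (`<contest id=… ballots=…>` containing `<choice name=… votes=…>` elements), the dictionary keys and the function names are all hypothetical, and each real feed had its own structure.

```python
import xml.etree.ElementTree as ET
from collections import Counter


def extract_contest(xml_text, contest_id):
    """Step 1: find one <contest> element in a county's XML feed."""
    root = ET.fromstring(xml_text)
    for contest in root.iter("contest"):
        if contest.get("id") == contest_id:
            return contest
    raise KeyError(f"contest {contest_id} not found")


def simplify(contest):
    """Step 2: reduce the element to a small, JSON-friendly dict."""
    return {
        "contest_id": contest.get("id"),
        "ballots_cast": int(contest.get("ballots", "0")),
        "votes": {
            choice.get("name"): int(choice.get("votes", "0"))
            for choice in contest.iter("choice")
        },
    }


def combine(county_results):
    """Step 3: sum votes and ballots for a contest that spans several counties."""
    votes = Counter()
    ballots = 0
    for result in county_results:
        votes.update(result["votes"])  # adds counts for matching names
        ballots += result["ballots_cast"]
    return {
        "contest_id": county_results[0]["contest_id"],
        "ballots_cast": ballots,
        "votes": dict(votes),
    }


def chart_rows(combined):
    """Step 4: sort choices by votes and attach each one's share of the votes."""
    total = sum(combined["votes"].values())
    ranked = sorted(combined["votes"].items(), key=lambda kv: kv[1], reverse=True)
    return [
        {"name": name, "votes": n, "pct": round(100 * n / total, 1) if total else 0.0}
        for name, n in ranked
    ]
```

Keeping each step as its own function makes it straightforward to save every intermediate result, for instance with `json.dumps(simplify(...))`, before moving on to the next step.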

For each step in this process, we stored the output. This allowed us to check our work and backtrack if necessary.

Our data pipeline code was written in Python, then uploaded to a function in AWS Lambda where it ran every couple of minutes.
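For readers who haven’t used Lambda with Python, the entry point is a function that takes an `event` and a `context`. The sketch below shows one possible shape for a scheduled run. The `run_sources` helper, the `FETCHERS` registry and the placeholder `archive` function are hypothetical, and catching errors per source is a design choice made for this example rather than a description of our deployed function. The schedule itself would be configured outside the code, for example with a scheduled rule in AWS.

```python
def run_sources(fetchers, archive):
    """Fetch and archive each source, recording a status for every one."""
    status = {}
    for name, fetch in fetchers.items():
        try:
            archive(name, fetch())
            status[name] = "ok"
        except Exception as exc:
            # Keep going so one failing feed does not stall the others.
            status[name] = f"error: {exc}"
    return status


# Placeholders: a real deployment would register one zero-argument fetcher
# per election authority and an archive function that writes to S3.
FETCHERS = {}


def archive(name, body):
    pass


def lambda_handler(event, context):
    """Entry point that AWS Lambda calls on each scheduled invocation."""
    return run_sources(FETCHERS, archive)
```

Returning the per-source status from the handler means each run leaves a short record of which feeds succeeded.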

We like Lambda because it eliminates the overhead of provisioning, configuring or load balancing servers. We also don’t have to pay for unused computing time. This approach to cloud computing is often called “serverless”, but that label is misleading. Of course there are still servers working in the background, but we aren’t reserving and managing specific machines for our own purposes. We let AWS handle all that.

Lessons learned

When polls finally close on Election Day, your newsroom needs as much bandwidth as possible to report out and analyze incoming results. You need to do a lot of planning and coordinating before you start coding. Below we outline our lessons learned from automating the Voice of OC election results.

Start early

Automation and planning go hand-in-hand. Expect to spend more time and effort automating live election results than you would otherwise spend keeping up your current editorial processes. The payoff is not about doing less work, but rather creating opportunities to do even more ambitious and interesting work.

Inspect and access your source data

State election authorities, and many local ones, start releasing “unofficial” results immediately after polls close on Election Day. As early as possible, contact the offices of the authorities in your coverage area, and ask how to access these results in whichever machine-readable formats are available.

Simulate the results

Early access to the test feeds and APIs of state and local election authorities will reveal the structure and format of pending results. However, the actual vote and ballot counts will remain at zero until polls close on Election Day.

It’s difficult to design around data that doesn’t exist yet. Working with simulated vote and ballot counts will at least give you and your team a chance to anticipate the flow of the data pipeline, validate key steps of the process and visualize your graphical output as it will appear when real results are released.
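One simple way to produce simulated counts is to take a zeroed-out contest and fill it with seeded random numbers. The sketch below assumes a contest is represented as a dict with `ballots_cast` and `votes` keys. That shape, the function name and the `simulated` flag are illustrative assumptions, and the numbers it generates are made up for testing only.

```python
import random


def simulate_counts(zeroed, total_ballots, fraction_reported, seed=0):
    """Return a copy of a zeroed contest filled with made-up test counts."""
    rng = random.Random(seed)  # seeded, so a test run can be reproduced
    ballots = int(total_ballots * fraction_reported)
    names = list(zeroed["votes"])
    # Random weights decide how the simulated ballots split between choices.
    weights = [0.5 + rng.random() for _ in names]
    scale = sum(weights)
    votes = {name: int(ballots * w / scale) for name, w in zip(names, weights)}
    # Flag the output so simulated numbers are never mistaken for real results.
    return {**zeroed, "ballots_cast": ballots, "votes": votes, "simulated": True}
```

Calling this repeatedly with a growing `fraction_reported` (say 0.1, 0.5, 1.0) gives a rough stand-in for results arriving over the course of election night, which is enough to exercise the pipeline and preview the charts.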