Need for speed 2: Newspaper data diving, metrics and methodologies

Welcome to the weeds, fellow bit-twisters and data divers. We can chat here without worrying about the numeracy nonbelievers. This post details the methodologies used in “Need for speed 1: Newspaper load times give ‘slow news days’ new meaning.”

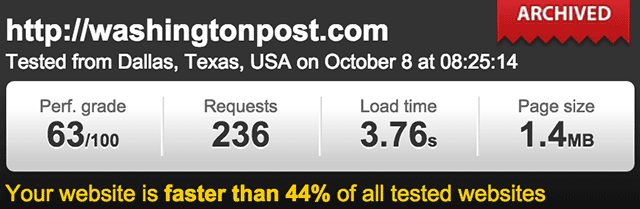

First, you and I both know “load time” is a fickle metric, completely dependent on the user’s connection speed and location. These screenshots of Pingdom Website Speed Test results show load times for The Washington Post: first, 3.76 seconds from Dallas; a minute later, 16.46 seconds from San Jose, just a few states away.

I hope the number of results in this report (1,455 newspapers) smooths out these variances enough to approach an overall average of actual load times. But maybe not. So, as with the Tools We Use reports, consider the data herein more generally informative than statistically precise.

Methodology

The steps to improve the accuracy of my results include:

- Rerunning tests for 100-plus sites with load times greater than 50 seconds (more than two standard deviations above the mean), which returned more reasonable results for 95 percent of those sites.

- Visually inspecting all sites with load times under four seconds and deleting any that were not really news sites (loosely defined as regularly updated linked lists of news articles and sections).
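
The rerun step above can be sketched roughly as follows. This is a minimal illustration, not the script used for this report: the function name, the site names and the load times are all hypothetical, and it assumes "exceeding two standard deviations" means more than two sample standard deviations above the mean.

```python
from statistics import mean, stdev

def flag_for_rerun(load_times, k=2.0):
    """Return the sites whose load time exceeds mean + k sample standard deviations.

    load_times maps a site name to its load time in seconds.
    """
    values = list(load_times.values())
    cutoff = mean(values) + k * stdev(values)
    return sorted(site for site, secs in load_times.items() if secs > cutoff)

# Hypothetical load times in seconds, for illustration only.
sample = {
    "a.example": 12.0, "b.example": 14.0, "c.example": 11.0, "d.example": 13.0,
    "e.example": 15.0, "f.example": 12.5, "g.example": 13.5, "h.example": 90.0,
}

print(flag_for_rerun(sample))
```

With a list as large as the one in this report, a single extreme result barely moves the cutoff; in a tiny sample like this one, the outlier inflates the standard deviation it is measured against, so treat the threshold as a rough screen.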

Perhaps I can divert you from my data deficiencies with some eye candy:

Note the nice funnels of correlation for requests and page weight (at the top). I expected PageSpeed scores, Alexa rank and other factors to also correlate well. None did.

So I grabbed a bunch of other data, including bounce rates, DOM elements and monthly visits. But requests and page weight remained the only factors I found in lock-step with load times:

| Factor | Correlation with load time | Correlation with speed index | Mean | Median |
|---|---|---|---|---|
| Load time | n/a | 0.663 | 16.7s | 13.0s |
| Requests | 0.740 | 0.540 | 281 | 228 |
| Page weight (MB) | 0.683 | 0.461 | 4.7MB | 3.9MB |
| Speed index | 0.663 | n/a | 5.9s | 6.4s |
| Widgets | 0.345 | 0.250 | 256 | 259 |
| Desktop score | -0.274 | -0.171 | 52/100 | 54/100 |
| Mobile score | -0.224 | -0.188 | 45/100 | 45/100 |
| Mobile UX | 0.199 | 0.164 | 94/100 | 99/100 |
| DOM Elements | 0.074 | 0.125 | 1594 | 1577 |
| Rank | -0.071 | -0.069 | 733,473 | 311,108 |
| Bounce rate | -0.014 | -0.063 | 56.6% | 57.4% |
| Circulation | 0.004 | 0.040 | 90,605 | 23,592 |
| Pages-per-session | 0.004 | 0.115 | 2.9 | 2.5 |
| Visits/mo. | -0.006 | 0.030 | 627,872 | 45,000 |
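
For readers who want to reproduce a coefficient like those in the table, here is a minimal sketch. It assumes the figures are Pearson correlation coefficients, which the post does not state, and the sample numbers are hypothetical rather than taken from the newspaper data.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 values each")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of products of deviations; the 1/n factors cancel in the ratio.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return cov / sqrt(var_x * var_y)

# Hypothetical request counts and load times (seconds), for illustration only.
requests = [100, 200, 300, 400]
load_times = [5, 9, 14, 20]

print(round(pearson(requests, load_times), 3))
```

A value near 1 means the two factors rise together, a value near -1 means one falls as the other rises, and a value near 0 (like most rows in the table) means no linear relationship shows up.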

I now have a sea of data I’m just starting to wade through. I’ll be looking at other load time correlations, like with CMS and servers. If you have suggestions, please comment below.

Sources

The newspaper data came mostly from API calls to:

- WebPagetest.org for load times, speed index, requests, page weight and DOM elements.

- Google PageSpeed Insights for desktop, mobile, and mobile UX scores.

- SimilarWeb for bounce rate, visits and pages-per-session.

- Alexa for the global site ranking.

- BuiltWith and W3Techs for CMS, server and widgets.

The “Tools We Use 1” methodologies section details how we compiled the list of newspapers and identified their CMS and servers. The URL tested for all results was the newspaper’s homepage. The WebPagetest tests ran in Chrome from Dulles, Virginia, simulating cable bandwidth.
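
As a rough illustration of what a request with those settings might look like, the sketch below only builds a test URL and sends nothing. The endpoint, the parameter names (`url`, `k`, `location`, `f`) and the location string `Dulles:Chrome.Cable` are assumptions based on WebPagetest's public REST API as I understand it, not details confirmed by this post; check them against the current documentation before relying on them. The function name and the API key are placeholders.

```python
from urllib.parse import urlencode

# Assumed endpoint for submitting a test; verify against current WebPagetest docs.
WPT_ENDPOINT = "https://www.webpagetest.org/runtest.php"

def build_test_url(homepage, api_key, location="Dulles:Chrome.Cable"):
    """Build (but do not send) a test request for one newspaper homepage.

    The location string is assumed to encode test site, browser and
    connection profile: Dulles, Chrome, cable.
    """
    params = {
        "url": homepage,       # page to test
        "k": api_key,          # API key (placeholder)
        "location": location,  # assumed format: Location:Browser.Connectivity
        "f": "json",           # ask for a JSON response
    }
    return f"{WPT_ENDPOINT}?{urlencode(params)}"

print(build_test_url("https://www.example.com/", "YOUR_API_KEY"))
```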

Averages

In the top table of “Need for speed 1,” the “U.S. newspapers” averages are of all results for load times, requests and page weights (from WebPagetest.org), and of desktop scores (from Google PageSpeed Insights).

The “All sites” averages for requests, page weights and desktop scores are from the HTTP Archive (September 2015). Average load time for all sites is a slippery statistic. To get close, I used the admittedly shaky method of finding the load time at which Pingdom Tools switches from reporting “Your site is slower than X% of sites” to “Your site is faster than …” That happened at about 5.3 seconds.
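
The crossover idea can be sketched as follows, assuming each Pingdom result is recorded by hand as a load time plus whether the message said "faster" or "slower." The function name and the readings are hypothetical, invented only to show the arithmetic; they are not the results behind the 5.3-second figure.

```python
def estimate_crossover(observations):
    """Estimate where the verdict flips from 'faster' to 'slower'.

    observations is a list of (load_seconds, verdict) pairs. The estimate is
    the midpoint between the slowest 'faster' result and the fastest 'slower' one.
    """
    observations = list(observations)
    faster = [secs for secs, verdict in observations if verdict == "faster"]
    slower = [secs for secs, verdict in observations if verdict == "slower"]
    if not faster or not slower:
        raise ValueError("need at least one 'faster' and one 'slower' result")
    low, high = max(faster), min(slower)
    if low > high:
        raise ValueError("verdicts overlap, so there is no clean crossover")
    return (low + high) / 2

# Hypothetical readings, for illustration only.
readings = [
    (3.8, "faster"), (4.9, "faster"), (5.2, "faster"),
    (5.4, "slower"), (7.1, "slower"), (16.5, "slower"),
]

print(round(estimate_crossover(readings), 2))
```

Strictly speaking, the point where half of tested sites are faster and half are slower is a median, not a mean, which is one more reason to treat the figure as approximate.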

And noting way down here where no one will notice: “Widgets” isn’t really only widgets; it’s everything BuiltWith calls a technology, from a plugin for WordPress or jQuery to WordPress and jQuery themselves (so the total is proportional to the number of requests). I included this factor only because it was one of the few with decent correlation to load time.

Thanks to BuiltWith for donating an account to RJI for this project. Thanks to Michael Jenner and Randy Picht for direction, and to Harsh Taneja for deviations (of the standard kind). The top image comes from the University of Missouri yearbook Savitar, 1956.

I’ll leave you with one last data dump:

| Group | Load time mean (s) | Load time median (s) | Requests mean | Requests median | Page weight mean (MB) | Page weight median (MB) |
|---|---|---|---|---|---|---|
| All sites | 16.7 | 13.0 | 281 | 287 | 4.7 | 3.9 |
| By CMS: | | | | | | |
| Drupal | 13.8 | 11.1 | 218 | 213 | 3.9 | 3.4 |
| WordPress | 15.5 | 12.4 | 194 | 152 | 6.0 | 4.0 |
| BLOX CMS | 17.6 | 13.9 | 348 | 295 | 5.5 | 4.7 |
| By Server: | | | | | | |
| Apache | 13.5 | 10.4 | 192 | 154 | 3.8 | 3.0 |
| Nginx | 16.0 | 12.5 | 235 | 224 | 5.0 | 3.6 |
| IIS | 22.2 | 20.0 | 391 | 317 | 5.2 | 4.9 |
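
A breakdown like the one above comes down to grouping rows by CMS or server and taking the mean and median of each group. The sketch below shows one way to do that. The field names (`cms`, `load_s`) and the rows are hypothetical, and it is not the process used to build the table.

```python
from collections import defaultdict
from statistics import mean, median

def summarize_by(rows, key, metric):
    """Group rows by `key` and report the mean and median of `metric` per group."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row[metric])
    return {
        group: {"mean": round(mean(values), 1), "median": round(median(values), 1)}
        for group, values in groups.items()
    }

# Hypothetical rows, for illustration only.
rows = [
    {"cms": "Drupal", "load_s": 10.0},
    {"cms": "Drupal", "load_s": 12.0},
    {"cms": "Drupal", "load_s": 20.0},
    {"cms": "WordPress", "load_s": 9.0},
    {"cms": "WordPress", "load_s": 15.0},
]

for group, stats in summarize_by(rows, "cms", "load_s").items():
    print(group, stats)
```

Reporting both figures is useful here because a few very slow sites pull the mean well above the median, as the gap between the two columns in the table shows.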