‘Do people even read captions?’

Why it’s important to test what we THINK we know about media

Yes, people read captions. They’re some of the most scannable, well-read elements in news media. So how can we make them truly worthwhile? It’s time to test.

Why is it important to test what we think we already know about how people view images and read the words that accompany them? My friend and colleague Dave Stanton says it well. He’s a developer, researcher and strategist who has guided me through any number of research ventures.

“While there are some common ways we all think, so much of how a person understands a message is based on their experiences both as an individual and through shared cultural norms,” he wrote. “This is even more important with visual design and storytelling where pattern recognition is a primary means of understanding.”

As we’ve shared before, we’ve done eye-tracking studies to measure the impact of quality photojournalism. Now we’re ready for a little deeper research on photo captions. With Sue Morrow and Akili Ramsess from the National Press Photographers Association, my University of Minnesota colleague Regina McCombs and half a dozen more experts on speed dial, I’m going to analyze four different formats for photo captions.

Research needs a fine focus to help pinpoint actionable steps toward improving a process.

Captions are often the most read item in a news publication. Yet we have never studied them to define which qualities lead to better comprehension, retention and even feelings of trust.

With the help of this fellowship and the generosity of Visura co-founders Adriana Teresa Letorney and Graham Letorney, I have created a custom testing site for photojournalism and visual projects. The site is easy to navigate and populate. All testing can be done remotely and it pulls the data directly into a Google spreadsheet, which can then be easily analyzed. (Bingo!) My caption study will begin on March 1, 2021.

Here is what the process will be:

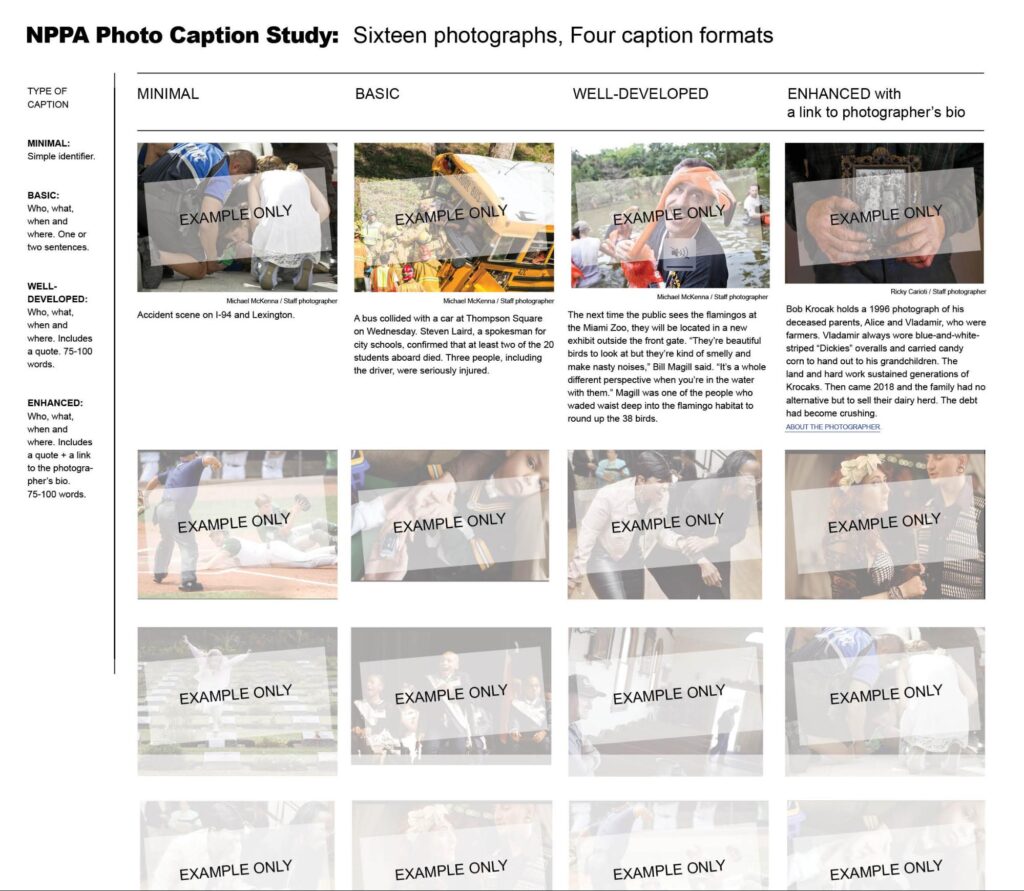

I will test the four photo caption formats we’ve established: minimal, basic, developed and enhanced.

- Participants will view 16 photographs: four of each with the different caption formats.

- Participants will then answer a series of questions about each photo. Questions will be geared toward assessing comprehension of the story, retention of information, likelihood of sharing the photo, perception of quality and level of trust.
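To make the balanced design concrete, here is a minimal sketch of how 16 photos could be paired with the four caption formats so that each format appears exactly four times. The photo IDs, the `assign_formats` helper and the random shuffle are assumptions for illustration only; the study's actual assignment procedure is not described here.

```python
import random

# The four caption formats named in this article.
CAPTION_FORMATS = ["minimal", "basic", "developed", "enhanced"]


def assign_formats(photo_ids, seed=None):
    """Pair each photo with a caption format, using every format equally often.

    Hypothetical helper: shuffling is an assumption, not the study's stated method.
    """
    if len(photo_ids) % len(CAPTION_FORMATS) != 0:
        raise ValueError("photo count must be a multiple of the number of formats")
    per_format = len(photo_ids) // len(CAPTION_FORMATS)
    # Build a list with each format repeated equally, then shuffle its order.
    formats = CAPTION_FORMATS * per_format
    random.Random(seed).shuffle(formats)
    return dict(zip(photo_ids, formats))


# Placeholder photo IDs: photo_01 through photo_16.
photos = [f"photo_{n:02d}" for n in range(1, 17)]
plan = assign_formats(photos, seed=1)
```

Passing a fixed `seed` makes the assignment repeatable, which could help if the same set needs to be rebuilt later.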

Definitions for each of the four caption formats:

- Minimal: Simple identifier. One sentence.

- Basic: Who, what, when and where. One or two sentences, 25-40 words.

- Developed: Who, what, when, where, why. Includes a quote. 75-100 words.

- Enhanced: Who, what, when, where, why. Includes a quote. 75-100 words. Plus a link to the photographer’s bio.
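The word counts above lend themselves to a quick automated check while captions are being drafted. The sketch below is only an illustration: the `fits_format` helper is hypothetical, and counting words by splitting on whitespace is an assumption. It does not check sentence counts, quotes or bio links.

```python
# Word-count ranges taken from the definitions above.
# None means the definition states no word range (minimal captions).
WORD_RANGES = {
    "minimal": None,
    "basic": (25, 40),
    "developed": (75, 100),
    "enhanced": (75, 100),
}


def fits_format(caption, caption_format):
    """Return True if the caption's word count is within the format's range."""
    limits = WORD_RANGES[caption_format]
    if limits is None:
        return True
    low, high = limits
    # Assumption: a "word" is any run of characters separated by whitespace.
    word_count = len(caption.split())
    return low <= word_count <= high
```

An editor could run each draft caption through `fits_format` before loading it into the testing site, then review any that fall outside the range by hand.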

The testing photos are being selected and captions are being created by a team of visual editors including NPPA leaders Akili Ramsess and Sue Morrow; Star Tribune Director of Photography and Multimedia Deb Pastner; Star Tribune Senior Photo Editor Cheryl Diaz Meyer, creative director and editor Kris Viesselman and University of Minnesota educator Regina McCombs.

Staying consistent with my earlier studies, we’ll test two age groups:

- Digital Natives: people 18-35 years old (born into a digital world).

- Print Nets: people 45-70 years old (one foot in the print world, one foot in the digital world).

Research tells us that it’s less important to test people whose ages fall between these two groups, because about half of them tend to respond like one group and about half like the other. The goal of this study is to create data that informs the choices journalists make when writing captions. This will help us write captions that better inform and build trust with our communities.

Since the testing can be done remotely, I am making it available to various universities and organizations, like Al Tompkins’ MediaWise for Seniors project at Poynter, and the Sona Subject pool at the University of Minnesota, which has about 450 students this semester. The Sona pool is a great resource for researchers and grad students who wish to use human subjects in their work. Students can participate in studies to earn extra credit in their courses, and researchers have access to a large pool of undergraduate subjects.

Once we have results from 100 people in each age group, I will work with my research partner, Dave Stanton, and with Valerie Belair-Gagnon at the Minnesota Journalism Research Center to analyze the data.

While the testing site is being refined, the URL is private. But I will use this new, easy-to-use research tool for multiple studies, making it available to others to gather information about visual media. It might be used, for example, to look at lower-thirds, over-the-shoulder (OTS) images or screen crawling data on newscasts. My subsequent studies will look for insight into variations on web video or the way stock images are perceived.

I think this research tool was well worth the investment and the time it’s taking to develop.

The results of the caption survey, along with the bulk of my earlier research on how people view and react to news media, will be published on my new site, which will be up and running in March 2021.

If you would like to get involved or be added to my mailing list, please let me know at saraquinnmedia@gmail.com. I will also be looking for candidates to take the caption survey, particularly in the 18-35 and 45-70 age groups.